Facebook – The Algorithm on Trial

Three courtrooms, one corporation, and the legal reckoning that could reshape the internet

By Robert Nogacki | March 2026

In October of 2023, the attorneys general of forty-one American states filed a two-hundred-and-thirty-three-page complaint against Meta Platforms, Inc. What made it unusual, and in certain respects historic, was not the gravity of the charges but the identity of the prosecution’s most important witness. The complaint was built almost entirely from Meta’s own words: its internal research, its patents, its executives’ congressional testimony, its engineers’ private e-mails. The corporation as its own star witness. By early 2026, two jury trials were underway, one in Santa Fe and one in downtown Los Angeles, while a federal appeals court in San Francisco was being asked to decide a question that would determine whether any of it could proceed at all. What follows is an attempt to map the whole battlefield.

I. Three Fronts

To understand what is at stake in the litigation now unfolding across American courtrooms, it helps to keep the three proceedings distinct. They share a defendant, a set of underlying facts, and a moral architecture. But they differ in legal theory, in plaintiff, and in what they are actually trying to accomplish.

Santa Fe: The Undercover Operation

In early 2026, a trial opened in Santa Fe before a New Mexico jury—the first standalone state proceeding to put Meta before twelve citizens on claims of child sexual exploitation facilitated by the platform’s own algorithms. New Mexico’s attorney general, Raúl Torrez, had prepared for it in an unusual way. His office ran an undercover investigation, code-named Operation MetaPhile, in which investigators created a fictitious account for a thirteen-year-old girl named Issa Bee on Facebook. Within weeks, the account had accumulated five thousand friends and more than sixty-seven hundred followers—nearly all adult men, concentrated in Nigeria, Ghana, and the Dominican Republic. Meta’s recommendation algorithm kept amplifying the account’s reach. The platform, for its part, suggested that Issa Bee set up a professional account and begin monetizing her audience. The investigation ran multiple decoy accounts; three individuals were arrested after appearing in person believing they were meeting underage children.

No actual thirteen-year-old was involved. Everything the platform did, however, was entirely real. The state’s legal theory was constructed to sidestep Section 230 of the Communications Decency Act, the federal statute that has long shielded internet companies from liability for what their users post, by arguing not that Meta was responsible for harmful content but that the platform’s design choices constituted a defective product and that Meta had affirmatively lied to regulators about the scope of the problem. Neither theory, the state contends, is touched by Section 230.

Los Angeles: The Billionaire on the Stand

In a courtroom in downtown Los Angeles, a different trial had been under way since early February. This one is a bellwether: a single test case selected from a pool of more than sixteen hundred lawsuits filed by parents against Meta, Google (which owns YouTube), Snap, and TikTok. TikTok and Snap have already settled their claims, confidentially. Meta and Google remain as defendants. Adam Mosseri, the head of Instagram, testified on February 11th, a week before Mark Zuckerberg took the stand.

The plaintiff, identified in court papers as Kaley G.M.—she is twenty years old now, and her last name is being withheld—began using Instagram when she was nine. On the morning of February 18th, she sat in the front row of the courtroom and watched as Mark Zuckerberg, one of the five wealthiest people on the planet, settled into the witness chair. He was there as an adverse witness, which is a legalism meaning that the people questioning him were not on his side. He wore a dark-blue suit and a gray tie. He looked pale and quietly irritated, according to reporters in the room, as the plaintiff’s attorney, Mark Lanier—a Texas lawyer who made much of his reputation suing Purdue Pharma—worked through the evidence, item by item.

The exchanges that followed were, by turns, revealing and remarkable. Lanier introduced documents showing that four million children under the age of thirteen had Instagram accounts in 2015, including roughly thirty per cent of all American children between the ages of ten and twelve. He produced an e-mail in which Zuckerberg expressed hope that the time his users spent on the app would increase by twelve per cent over the next three years. He displayed internal documents from 2022 that spelled out what Meta called “milestones”: forty minutes of daily use per active user in 2023, forty-two in 2024, forty-four in 2025, forty-six in 2026. And he quoted a self-review written by Adam Mosseri, the head of Instagram, in which Mosseri listed his primary objective as ensuring that the app remain “culturally relevant as measured by session time and sharing, particularly with teens.”

Zuckerberg insisted, patiently and repeatedly, that these were not goals. They were milestones. “You don’t give them goals, you give them milestones,” Lanier said, with the faint mockery of a man who has cross-examined more than a few executives. “That’s wrong,” Zuckerberg answered flatly. “You’re mischaracterizing the document and what I said.” The jury—twelve ordinary people who almost certainly carry Instagram on their phones—took that in.

San Francisco: The Question That Decides Everything

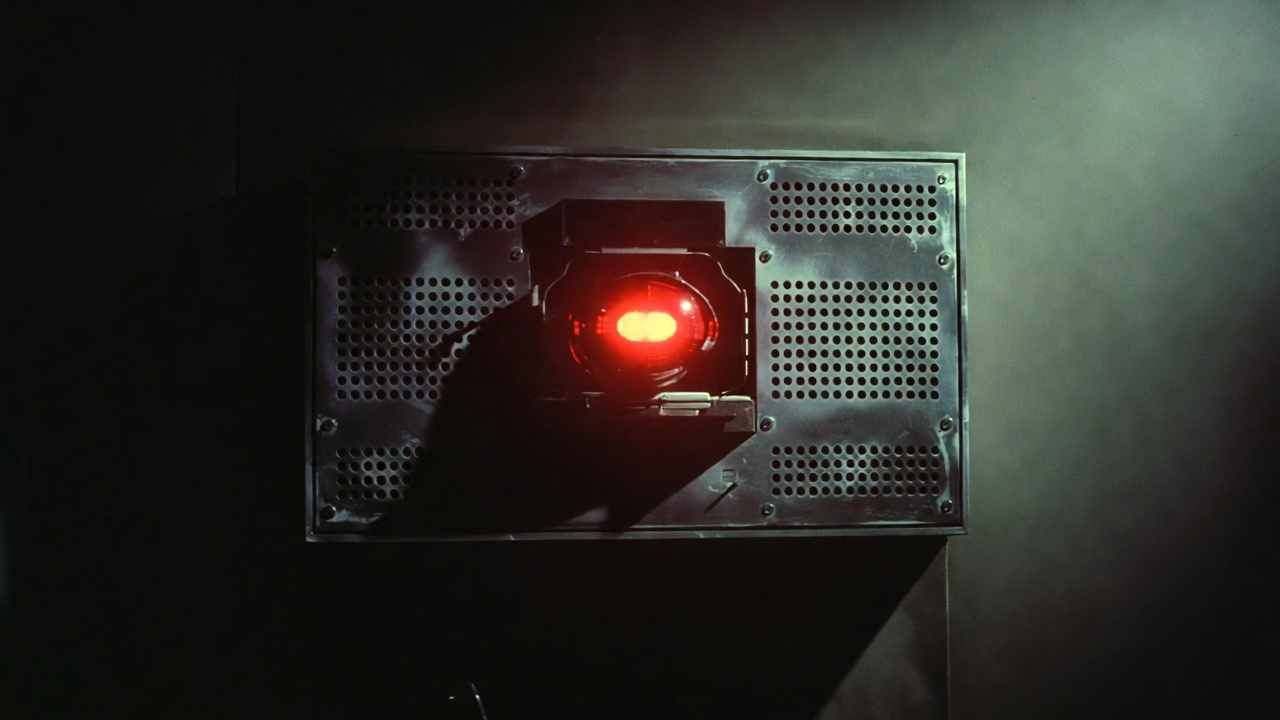

The third proceeding is in some respects the most consequential, though it has generated far less press coverage than Zuckerberg’s testimony. In the Ninth Circuit Court of Appeals in San Francisco, Meta has challenged a district court ruling that refused to dismiss the forty-one-state complaint on Section 230 grounds. The case number is 24-7032, and the question before the court is precise: does Section 230 protect a platform from liability for its own design decisions—its infinite scroll, its engagement-maximizing algorithms, its notification architecture—or does the statute’s protection extend only to content that users themselves create?

In June of 2025, a coalition of civil-liberties organizations and legal scholars filed an amicus brief in support of the states. Its signatories included Danielle Keats Citron, of the University of Virginia; Frank Pasquale, of Cornell University; and Neil Richards, of Washington University in St. Louis—some of the foremost authorities on platform law in the country. Their brief is, in effect, the intellectual architecture of all three proceedings.

II. The Shield That Wasn’t

To appreciate why the Ninth Circuit case matters so much, it helps to understand where Section 230 came from—and, more importantly, what it was never meant to do.

In 1995, a New York court decided a defamation case involving Prodigy Services, an online service that hosted bulletin boards. Prodigy moderated its boards (it banned obscenity and enforced community guidelines), and the court ruled that this editorial activity made Prodigy a publisher, in the legal sense, rather than a distributor. Publishers, unlike distributors, are liable for everything they communicate, including content they didn’t write. The implication was alarming: any platform that chose to moderate user content could be held responsible for all user content it failed to catch. Congress recognized this as a trap and moved quickly. Section 230, passed in 1996, established that internet platforms are not publishers of their users’ content, regardless of whether they moderate.

The problem, the amicus brief argues, is that what Congress enacted as a scalpel has been wielded for thirty years as a bludgeon. The statute was designed to solve one specific problem—what the brief calls the “moderator’s dilemma”—and nothing else. The dilemma, in its simplest form, is this: if moderating your platform makes you liable for everything you don’t moderate, you will either stop moderating (and let the worst content flourish) or stop hosting users at all. Section 230 removed that particular liability. It was not intended to immunize platforms from the consequences of their own engineering choices.

The Test

The Ninth Circuit has developed a two-part test for determining when Section 230 applies, refined through cases running from Barnes v. Yahoo! (2009) through Lemmon v. Snap (2021) to Calise v. Meta (2024). First: would satisfying the duty alleged in a lawsuit require a platform to monitor, edit, or remove user-generated content? Second: does the duty arise from the platform’s decision to host or moderate that content, rather than from some other source? Only if both answers are yes does Section 230 shield the defendant.

The controlling precedent is Lemmon v. Snap, decided by the Ninth Circuit in 2021. Snap had built a filter that let users display their speed on videos—a feature that teenagers, being teenagers, used while driving. Several died. The court held that a product-liability claim for defective design was not barred by Section 230, because the duty to design a safe product does not arise from a company’s decision to host user content. It arises from its role as a manufacturer. The court’s core reasoning: those acting solely as publishers bear no comparable duty.
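The two-part test, as this article summarizes it, reduces to a simple conjunction. The sketch below only illustrates that logic; it is not a statement of the law, and the function and parameter names are hypothetical labels invented for the example.

```python
def section_230_shields(requires_policing_user_content: bool,
                        duty_arises_from_publishing: bool) -> bool:
    """Illustrative only: the shield applies when BOTH prongs are answered yes."""
    return requires_policing_user_content and duty_arises_from_publishing


# A Lemmon-style defective-design claim, as described above: fixing the
# feature touches no user content, and the duty comes from the company's
# role as a manufacturer rather than as a publisher.
design_claim_shielded = section_230_shields(False, False)

# A hypothetical claim faulting a platform for failing to take down a
# user's post: both prongs are answered yes.
takedown_claim_shielded = section_230_shields(True, True)

print("design claim shielded:", design_claim_shielded)
print("takedown claim shielded:", takedown_claim_shielded)
```

On this reading, a single "no" on either prong is enough to take a claim outside the statute, which is why the states frame every allegation around Meta's own engineering rather than around anything a user posted.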

This is the theory that animates all three Meta cases. The amicus brief crystallizes it with characteristic bluntness:

“Section 230 is not a get-out-of-jail-free card. It is a scalpel to be used on a specific type of speech-endangering claim—one that is not at issue in this case.” — EPIC Amicus Brief, No. 24-7032, June 30, 2025

Six Design Decisions That Section 230 Cannot Protect

The forty-one-state complaint attacks Meta on six distinct design fronts. None involves the content that users post. Each involves a choice Meta made as a product designer—and each, the states argue, represents an unfair or deceptive trade practice that a jury should be permitted to evaluate on its merits.

Infinite scroll and autoplay. Meta patented a mechanism for delivering content in an unbroken stream—a feed that never runs out, that updates in real time as the user scrolls, that begins playing the next video before the previous one has ended. Both features eliminate what designers call “friction points”: the natural pauses at which a person might decide to stop. A fix would not require Meta to touch a single piece of user-generated content. It would simply require restoring the pagination that predated infinite scroll, or requiring users to press play.

Engagement-maximizing recommendation algorithms. Facebook migrated from a chronological feed to an engagement-based one in 2009; Instagram followed in 2016. The algorithm does not rank content by its informational value or even by the user’s stated preferences. It ranks content by its predicted likelihood of generating the behaviors—comments, shares, lingering glances—that keep users on the platform longest. The complaint documents internal “milestones” for steadily increasing average session time through 2026. The alternative—optimizing for relevance to the user rather than for the platform’s revenue objectives—would require no content removal whatsoever.

Appearance-altering filters. Instagram offers tools that modify photographs to simulate the effects of cosmetic surgery: slimming jawlines, enlarging eyes, smoothing everything. An internal Meta panel of eighteen experts—confirmed by Zuckerberg under oath during the Los Angeles trial—recommended banning them. Meta declined, invoking principles of free expression. Its own internal research linked the filters to body dysmorphia in teenage girls. Meta banned them temporarily, then brought them back.

Notification systems engineered for psychological vulnerability. Meta patented an algorithm that determines the optimal moment to send a user a notification—not when it’s convenient for the user, but when the user is most likely to click. The system analyzes location, click history, and response patterns; it withholds notifications when the user starts ignoring them, then resumes at a more receptive moment. Teenagers receive, on average, two hundred and thirty-seven notifications a day. Turning them all off at once is not possible; they must be disabled category by category.

Ephemerality as an engine of FOMO. Stories disappear after twenty-four hours. Meta introduced the feature in 2016, explicitly to compete with Snapchat, and its own internal research documents that more than half of teenage Instagram users experience what psychologists call “fear of missing out.” A 2019 internal presentation concluded that young people were “acutely aware that Instagram can be bad for their mental health, yet are compelled to spend time on the app for fear of missing out on cultural and social trends.”

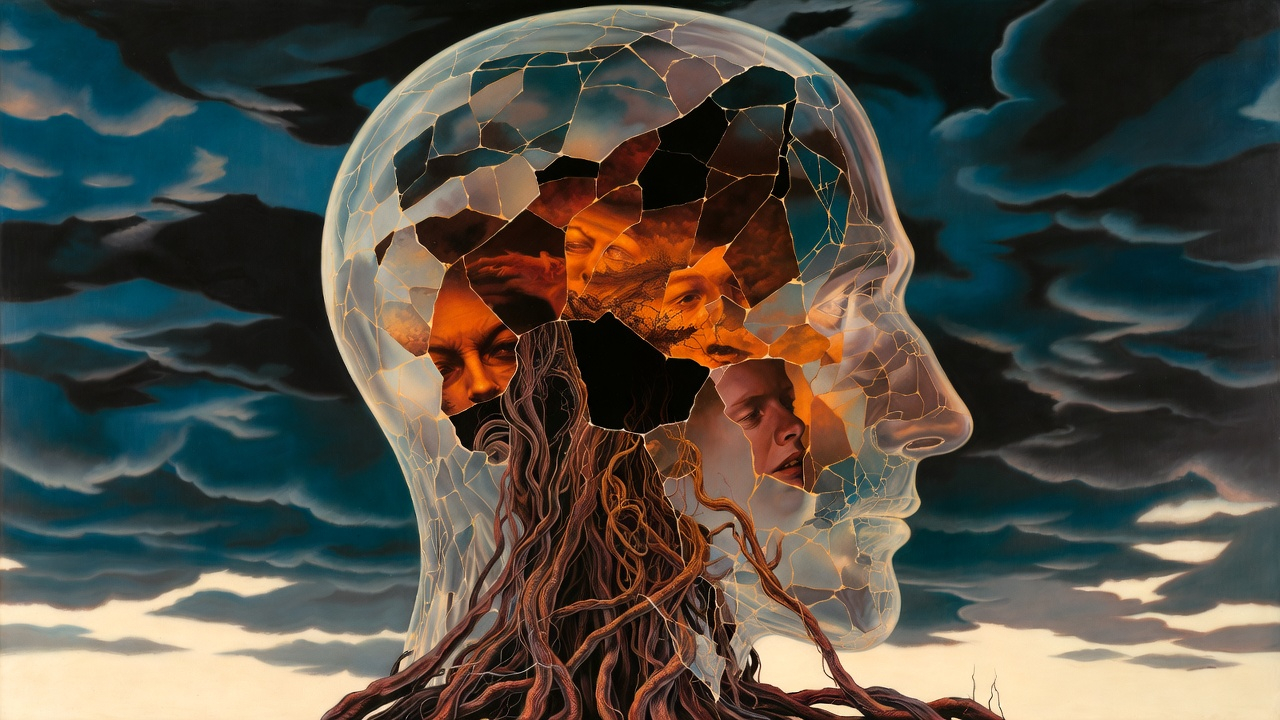

Intermittent variable reinforcement schedules. The term comes from behavioral psychology—from Skinner, not from Silicon Valley—and it describes the most powerful known mechanism for sustaining compulsive behavior: a reward that appears at unpredictable intervals. Slot machines operate on the same principle. A Meta employee wrote in an internal document cited in parallel proceedings before the same court: “Intermittent rewards are most effective (think slot machines), reinforcing behaviors that become especially hard to extinguish.” The document was describing a design goal.
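The second design decision above, the move from a chronological feed to an engagement-ranked one, can be illustrated with a toy example. This is a minimal sketch under stated assumptions: the `Post` fields, the `predicted_engagement` score, and the three sample posts are all hypothetical, and nothing here reflects Meta's actual ranking systems, which are not public.

```python
from dataclasses import dataclass


@dataclass
class Post:
    post_id: str
    posted_at: int               # hypothetical timestamp; larger means newer
    predicted_engagement: float  # hypothetical model score between 0 and 1


posts = [
    Post("a", posted_at=3, predicted_engagement=0.10),
    Post("b", posted_at=2, predicted_engagement=0.90),
    Post("c", posted_at=1, predicted_engagement=0.55),
]


def chronological_feed(items):
    """Newest first: the ordering depends only on when something was posted."""
    return sorted(items, key=lambda p: p.posted_at, reverse=True)


def engagement_feed(items):
    """Highest predicted engagement first, regardless of recency."""
    return sorted(items, key=lambda p: p.predicted_engagement, reverse=True)


chrono_order = [p.post_id for p in chronological_feed(posts)]
engaged_order = [p.post_id for p in engagement_feed(posts)]
print("chronological:", chrono_order)
print("engagement-ranked:", engaged_order)
```

Both feeds contain the same three posts. Only the sort key differs, which is the sense in which the states argue that changing the ranking rule would require no content removal.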

III. The Company as Its Own Witness

If legal theory is the skeleton of this litigation, Meta’s internal documents are its flesh. The forty-one-state complaint is an extraordinary piece of legal drafting not because it makes sweeping accusations—many lawsuits do that—but because nearly every accusation it makes is sourced to something Meta wrote itself.

The Founder’s Confession

In 2017, Sean Parker—the first president of Facebook, and one of the original architects of its growth strategy—gave an interview that, had it been sworn testimony, might have served as the complaint’s opening exhibit:

“The thought process that went into building these applications, Facebook being the first of them, was all about: How do we consume as much of your time and conscious attention as possible? That means that we need to sort of give you a little dopamine hit every once in a while, because someone liked or commented on a photo or a post or whatever. And that’s going to get you to contribute more content and that’s going to get you more likes and comments. It’s a social-validation feedback loop—exactly the kind of thing that a hacker like myself would come up with, because you’re exploiting a vulnerability in human psychology.” — Sean Parker, Axios, November 2017

Parker was not a disgruntled former employee speaking to a journalist. He was describing a design philosophy that, the complaint argues, has remained operative at Meta ever since. He was proud of it, in the slightly unsettling way that engineers sometimes are when they explain how a particularly elegant machine works.

A “Valuable but Untapped Audience”

The complaint documents that Meta described children between the ages of ten and twelve as a “valuable but untapped audience.” The company assembled an internal team to study this demographic. It commissioned strategy papers analyzing the long-term business opportunities that preteens represented. It planned to launch Instagram Kids—a separate app for children under thirteen—and abandoned the project only after attorneys general from multiple states and members of Congress raised alarms. Research by the organization Thorn, cited in the complaint, found that forty-five per cent of children aged nine to twelve used Facebook daily, and forty per cent used Instagram daily. Meta had access to these numbers.

On Capitol Hill, meanwhile, Antigone Davis, Meta’s global head of safety, testified on September 30, 2021, that the company did not “build” its platforms “to be addictive.” In July of 2018, Meta told the BBC that “at no stage does wanting something to be addictive factor into” the company’s design process.

The Prevalence Trick

Every quarter, Meta published what it called a Community Standards Enforcement Report—a document asserting that content violating the platform’s rules represented a fraction of a fraction of a per cent of all views. Zuckerberg described this “prevalence” metric to Congress as a model of corporate transparency. Meta amplified the reports through press releases and conference calls with journalists.

Simultaneously, safety engineer Arturo Béjar was running an internal study called the Bad Experiences and Encounters Framework (known inside the company as BEEF), which surveyed approximately two hundred and forty thousand users and found that fifty-one per cent of Instagram users had experienced a negative or harmful event in the previous seven days, a figure that climbed above fifty-four per cent for teenagers aged thirteen to fifteen. The two figures were compiled at roughly the same time. One was public. One was not. They are not contradictory, technically speaking: prevalence measures the share of harmful views as a proportion of all views, not the share of users who encounter harm. A user who scrolls past hundreds of items a day can meet harmful content every week even when only a sliver of all views is harmful. This is the statistical equivalent of a tobacco company noting, accurately, that the proportion of carcinogenic puffs to all puffs taken is vanishingly small.
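A short back-of-the-envelope calculation shows how a tiny per-view prevalence and a large per-user exposure rate can coexist. The inputs below are hypothetical round numbers chosen for illustration, not figures from Meta's reports or from the BEEF survey, and the sketch assumes each view is an independent draw, which real feeds are not. BEEF also measured self-reported bad experiences rather than rule-violating views, so this shows only why the two kinds of metric diverge.

```python
def share_of_users_exposed(prevalence_per_view: float, views_per_week: int) -> float:
    """Chance that a user meets at least one harmful item in a week,
    assuming every view is an independent draw (a simplifying assumption)."""
    return 1.0 - (1.0 - prevalence_per_view) ** views_per_week


# Hypothetical inputs, not Meta's figures.
prevalence = 0.0005      # 0.05 per cent of all views are harmful
views_per_week = 1400    # 200 items viewed per day

exposed = share_of_users_exposed(prevalence, views_per_week)
print(f"per-view prevalence: {prevalence:.2%}")
print(f"users exposed at least once a week: {exposed:.1%}")
```

Under these assumptions, roughly half of all users would encounter harmful content in a given week even though such content accounts for one view in two thousand.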

Project Daisy and the Choice Not to Act

Meta’s engineers ran a project called Daisy to test what would happen if the platform stopped showing users the number of likes on posts. The hypothesis was that visible like counts—a precise, public measure of social approval, updated in real time—drove unhealthy social comparison and anxiety, especially among teenage girls. The results suggested that hiding like counts was beneficial for a meaningful portion of users.

Meta did not make hiding like counts the default. It made the option available—buried in a submenu, requiring users to actively seek it out and turn it on. In a blog post published in May of 2021, the company explained that it hadn’t made the change a default because “not seeing like counts was beneficial for some, and annoying to others.” The complaint documents that this explanation was misleading. The company’s own “Teen Mental Health Deep Dive”—a survey of more than twenty-five hundred teenagers in the United States and the United Kingdom—found that one in five teenagers said Instagram made them feel worse about themselves, and that twenty-four per cent traced feelings of not being “good enough” specifically to their time on the app. Meta filed this research internally and continued to show like counts by default.

Molly Russell

A fourteen-year-old girl in the United Kingdom, Molly Russell, died in November 2017 after Instagram’s recommendation algorithm had, over a period of weeks, served her a sustained diet of content related to self-harm and suicide—content she had not searched for. Meta sent Elizabeth Lagone, its head of health and wellbeing policy, to the coroner’s inquest to testify that such content was “safe” for children to see. The coroner rejected the claim. His report, issued in October of 2022, concluded that Molly “died from an act of self-harm whilst suffering from depression and the negative effects of on-line content” that had been selected and delivered to her by the platform’s algorithms without her request:

“The platform operated in such a way, using algorithms, as to result, in some circumstances, in binge periods of images, video clips and text, some of which were selected and provided without requesting them. These binge periods … are likely to have had a negative effect on the mental health of Molly.” — Coroner’s Prevention of Future Deaths Report, October 13, 2022

This was an independent legal proceeding in a different country, conducted under different evidentiary rules. It reached the conclusion that the forty-one American states allege in their complaint.

The Epidemiology

The complaint weaves the internal documents together with data from the Centers for Disease Control and Prevention. In 2011—the year before Instagram’s user base began its steep ascent—approximately nineteen per cent of American high-school girls reported having seriously considered attempting suicide. By 2021, that figure was thirty per cent. In the same decade, the rate at which high-school girls actually attempted suicide rose by roughly thirty per cent. Research by Jean Twenge and others, drawing on CDC trend data, has also documented a sharp spike in suicide rates among younger adolescents in the years immediately following Instagram’s surge in popularity, though the CDC’s biennial YRBS methodology makes it difficult to attribute precise single-year figures to specific age cohorts with confidence.

Correlation is not causation, and the complaint does not pretend otherwise. What it argues is that this epidemiological pattern, combined with internal documents showing that Meta knew about the harm, knew that its design choices were contributing to it, and chose—systematically and at every level of the organization—not to address it, constitutes something more damning than negligence. Arturo Béjar, a former director of integrity at the company, testified before the Senate in November 2023 that Zuckerberg had ignored his personal appeals for a “culture shift” on teen safety. Meta, Béjar said, “knows about harms that teenagers are experiencing in its product, and they’re choosing not to engage about it or do meaningful efforts around it.”

IV. The Pattern

The tobacco parallel has become a commonplace in coverage of this litigation, and the opioid parallel is close behind. It is worth pausing to explain why both comparisons are precise rather than merely rhetorical. The tobacco industry knew, by the early nineteen-fifties, that cigarettes caused cancer. The Master Settlement Agreement—the comprehensive legal resolution of state claims against the major tobacco companies—came in 1998: roughly fifty years after that knowledge became internal corporate fact.

Purdue Pharma understood OxyContin’s addictive potential by the late nineteen-nineties. The original Sackler settlement was rejected by the United States Supreme Court in June 2024. A new seven-point-four-billion-dollar agreement was reached in principle in early 2025 and approved by states in June of that year, though final court approval remained pending at the time this article went to press—roughly a quarter-century after the harm first became undeniable.

In both cases, the mechanism was identical: internal research confirming the harm; aggressive marketing targeted at the most vulnerable populations; regulatory lobbying to forestall oversight; public denials calibrated to preserve plausible deniability; and, eventually, a financial settlement that proved profitable for the company’s owners relative to the scale of the damage done. Mark Lanier, who is now pressing Zuckerberg for answers in a Los Angeles courtroom, worked on the opioid cases. He knows the script.

The difference, and it is a difference that cuts against Meta, is one of speed and scale. Tobacco and opioids worked slowly, on adults, without the ability to achieve instantaneous global reach. An algorithm operates in real time, at planetary scale, with particular intensity on developing brains that are, by definition, least equipped to resist it. The mental-health data began diverging from its baseline in 2012. That is the year the smartphone became a mass-market product. It is also the year Facebook, as Meta was then called, acquired Instagram for a billion dollars.

In 2017, Chamath Palihapitiya, Facebook’s former vice-president of user growth, announced publicly that he had forbidden his own children from using the platform he had helped build. That same year, Facebook had two billion users. The people who designed this product knew that they did not want their own children exposed to it. They built it anyway, because someone else’s children were always more available than their own.

V. Where Things Stand

The Ninth Circuit: The Decision That Frames Everything Else

The appeal in No. 24-7032 is the least glamorous of the three proceedings and almost certainly the most important. If the Ninth Circuit holds that Section 230 bars the states’ design-liability claims, the forty-one-state complaint collapses. If it holds—as the amicus brief argues it should—that Section 230 applies only to claims that would force a platform back into the moderator’s dilemma, the cases proceed to the merits.

The circuit’s recent precedents run in one direction. Lemmon (2021), Calise v. Meta (2024), HomeAway.com v. City of Santa Monica (2019), Doe v. Internet Brands (2016), Barnes v. Yahoo! (2009): each, in its own way, has declined to extend Section 230 beyond its historical purpose. Changing the design choices at issue in the Meta cases would not require Meta to remove a single word of user-generated content. It would require Meta to change its own engineering decisions. Section 230 was never meant to protect those.

Los Angeles: The Bellwether

Closing arguments concluded on March 12th; as of this writing, the jury remains in deliberation. A verdict in the Kaley G.M. case will set the terms for the sixteen hundred cases that follow. A substantial damages award will bring both sides to the table for a global settlement, almost certainly in the billions, modelled on the tobacco and opioid resolutions. A defense verdict will sharply reduce the settlement value of the remaining cases, though it will not end the litigation entirely.

What a verdict in either direction will not do is resolve the legislative question. Congress has been trying to amend Section 230 for more than a decade. Apart from the 2018 sex-trafficking carve-out known as FOSTA-SESTA, every bill has died in committee, killed by a combination of tech-industry lobbying and a genuine constitutional dispute about what the First Amendment permits. Courts can impose financial liability. They cannot rewrite the statute. This is why the Ninth Circuit proceeding matters more than the jury trial, even if it will never produce a scene as arresting as Zuckerberg in the witness chair.

Santa Fe: A Methodological Precedent

The New Mexico case is the most procedurally adventurous of the three. Rather than relying on internal documents and whistleblower testimony, the state built its case through a real-time undercover operation, documenting what Meta’s algorithms actually do when an account that looks like a thirteen-year-old girl appears on the platform. It is a methodology borrowed less from consumer-protection law than from organized-crime prosecution.

Meta’s defense has attacked the ethics of the investigation: the state’s investigators used false birthdates to circumvent the platform’s teen-safety features, and they used photographs of a real woman to establish the cover identity. The platform, Meta argued, cannot be faulted for failing to detect deception that was deliberately engineered to defeat its safeguards. The state’s implicit response is the obvious one: any ten-year-old with internet access and a passing familiarity with arithmetic can do exactly what the investigators did. Meta has always known this.

As of March 15, 2026, the trial remains ongoing before a Santa Fe jury. Prosecutors have been presenting video depositions of Zuckerberg and Mosseri; the case is expected to conclude around late March.

VI. The European Mirror

These proceedings are taking place in American courts, but their implications are global—and nowhere more immediately than in Europe, where the Digital Services Act has been in full effect since 2024. The D.S.A. represents a fundamentally different regulatory philosophy than the one embedded in American law. Where Section 230 is reactive—establishing, essentially, that platforms are not liable for what happens on them—the D.S.A. is preventive. It imposes affirmative obligations on the largest platforms before harm occurs. Article 27 requires transparency about how recommendation systems work. Article 35 mandates that platforms identify and mitigate systemic risks, including risks to users’ mental health. Article 28 prohibits behavioral profiling of children for advertising purposes.

If the American litigation produces judgments or settlements confirming that Meta knew about specific harmful design mechanisms and chose not to address them, that factual record will be available to the European Commission in its D.S.A. enforcement proceedings. Under the D.S.A., knowingly permitting a systemic risk to persist is not merely an ethical failure; it is the foundation for administrative penalties of up to six per cent of global annual revenue. The documents that American discovery has unearthed will not stop at national borders.

The two regulatory models—American tort liability and European administrative oversight—are often described as competing approaches to the same problem. They are better understood as complementary. The D.S.A. tries to prevent harm by imposing procedural obligations before damage is done. American product liability tries to compensate harm after the fact and, through the deterrent effect of large damage awards, change corporate behavior going forward. Each model has weaknesses the other partially corrects. Neither, on its own, is sufficient. Together, they might constitute something close to an adequate response.

VII. Four Conclusions

First: product-liability theory for digital platforms is now mature. Lemmon v. Snap was not an outlier. It was a data point in a developing line of authority that has, since 2016, consistently refused to extend Section 230 beyond its historical purpose. Every major digital platform—not merely in the United States but globally, given the tendency of federal circuit precedents to shape standards internationally—should reassess which of its engineering choices could form the basis of liability claims, regardless of what Section 230 does or doesn’t say.

Second: the coalition of forty-one states is a model, not an episode. Where Congress cannot or will not act, states are making new law through litigation. The model—coordinated state-level suits, a coherent legal theory, internal corporate documents obtained through discovery—is replicable. European regulators, Australian consumer-protection authorities, and prosecutors in any jurisdiction with analogous statutory instruments are watching.

Third: what the complaint describes as Meta’s conduct has a name in the law, and it is not negligence. Negligence is failing to take adequate precautions. What the documents describe is a corporation that conducted detailed internal research confirming the harm its products caused, publicly denied that research, engineered the platform’s ostensible safety tools to be ineffective, and deployed neuroscientific knowledge about the developing adolescent brain to make its products more difficult for adolescents to resist. The tobacco industry took fifty years to be held accountable for that pattern. The opioid industry took roughly twenty-five. The interval is shrinking.

Fourth, and finally: a corporation that knew and chose not to act does not deserve immunity, whatever the statute says. The shield that Congress erected in 1996 was designed to protect good-faith decisions made under genuine uncertainty. It was not designed to protect knowledge combined with systematic inaction. Section 230 is a scalpel meant for one specific cut. For three decades, the technology industry has carried it as a broadsword. The three proceedings described in this piece are an attempt, conducted simultaneously on three legal fronts, to restore the instrument to its original dimensions.

On the morning of February 18th, Kaley G.M. sat in the front row of a Los Angeles courtroom and watched the man whose algorithm had shaped her childhood explain to a jury the difference between goals and milestones. She had been nine years old when she first opened Instagram. She is twenty now.

Somewhere in the court record—in the two hundred and thirty-three pages of the multistate complaint, or in one of the hundreds of internal documents that Meta’s lawyers have spent years trying to keep sealed—is a finding from the Teen Mental Health Deep Dive, a 2019 internal study first surfaced by the Wall Street Journal in September 2021, in which Meta’s own researchers recorded teenagers describing their relationship to the app almost in the language of an addict. “I feel bad when I use Instagram,” one teenager told the researchers, “and yet I can’t stop.”

Meta commissioned that research. It is, in the end, the company’s most honest product review.

Robert Nogacki

Attorney at Law (WA-9026), Managing Partner, Kancelaria Prawna Skarbiec, Warsaw

The views expressed are the author’s own and do not constitute legal advice.

Sources:

Complaint for Injunctive and Other Relief, Case 4:23-cv-05448-YGR (41 states v. Meta, N.D. Cal., Oct. 24, 2023) | EPIC et al. Amicus Brief, No. 24-7032 (9th Cir. June 30, 2025) | Lemmon v. Snap, 995 F.3d 1085 (9th Cir. 2021) | Calise v. Meta, 103 F.4th 732 (9th Cir. 2024) | Stratton Oakmont v. Prodigy Services, 1995 WL 323710 | Coroner’s Prevention of Future Deaths Report re Molly Russell (Oct. 13, 2022) | Arturo Béjar, Senate Testimony (Nov. 2023) | Frances Haugen, Whistleblower Disclosures (Sept.–Oct. 2021) | CDC Youth Risk Behavior Survey 2011–2021 | Sean Parker, Axios (Nov. 2017) | Jean Twenge, “Increases in Depression, Self-Harm, and Suicide Among U.S. Adolescents After 2012,” Psychiatric Research and Clinical Practice (2020) | Georgia Wells, Jeff Horwitz & Deepa Seetharaman, “Facebook Knows Instagram Is Toxic for Teen Girls”, Wall Street Journal (Sept. 14, 2021) | CCDH, “7 Ways Meta Is Harming Kids” (March 2024) | New Mexico AG Press Release, Operation MetaPhile (Dec. 5, 2023) | Zuckerberg Testimony, CNN (Feb. 18, 2026) | Mosseri Testimony, AP News (Feb. 11, 2026) | Closing Arguments, PBS NewsHour (March 12, 2026) | Meta NM Trial, Wired | NM Video Depositions, AP/US News (March 3, 2026) | Frank Pasquale, Cornell DLI
