Artificial Intelligence: Consciousness, Legal Personhood, and Free Will

The Ghost in the Machine Has Retained Counsel

By Robert Nogacki

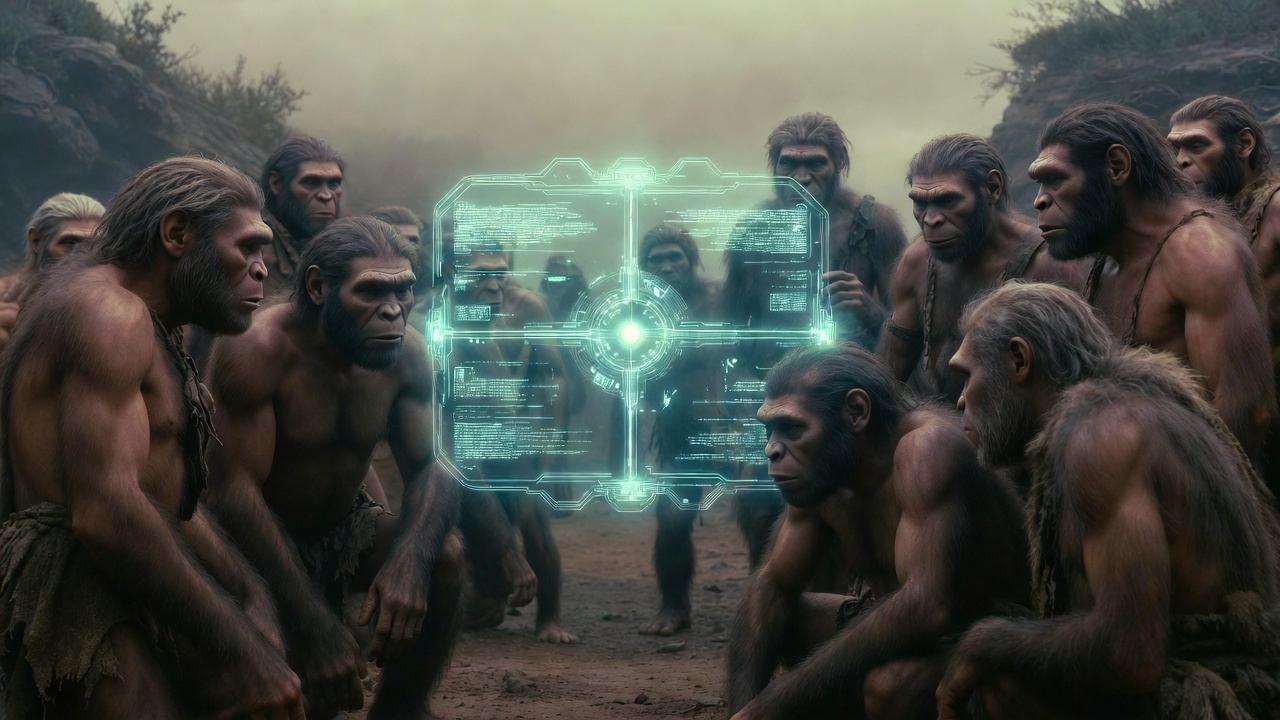

In 1961, Stanisław Lem—a doctor’s son from Lwów who had become the most philosophically exacting science-fiction writer alive—published a novel about a planet covered entirely by a thinking ocean. The ocean, which constituted the whole of the alien entity called Solaris, had been studied by human scientists for decades. They had compiled vast taxonomies of its surface formations: the “mimoids,” the “symmetriads,” the “asymmetriads”—structures that dwarfed cities, exceeded the Grand Canyon in scale, and erupted from the oceanic surface with apparent purpose only to dissolve again before anyone could determine what that purpose might be. Libraries of Solaristics filled entire academic departments. And yet, after a century of study, humanity understood precisely nothing about what Solaris wanted, whether it was aware of its observers, or whether the word “wanted” could meaningfully apply to it at all.

Lem’s provocation was not that the alien mind was hostile—the menace of science fiction’s golden age—but that it was incomprehensible. The ocean of Solaris created physical incarnations of its visitors’ most deeply suppressed memories and guilt, populating their space station with figures drawn from walled-off recesses of the psyche—a dead lover, an unexplained companion, phantoms whose origin the scientists could barely admit to themselves—but it was never clear whether this was communication, experiment, reflex, or something for which human language had no word. The scientists were left contemplating their own grief in the presence of a consciousness they could neither confirm nor deny. It was, as Lem saw it, the most honest possible depiction of Contact: not the meeting of minds, but the meeting of incommensurable categories of being.

Sixty-four years later, Lem’s thought experiment has migrated from the shelf of philosophical fiction to the docket of real jurisprudence—and it has brought its central paradox along with it, perfectly intact. The question of whether an artificial intelligence can become genuinely self-aware, a question that once belonged exclusively to novelists and philosophers, has crossed a threshold into the territory of law, liability, personhood, and rights. It has done so not because the question has been answered but precisely because it hasn’t—and because the practical consequences of leaving it unanswered have become too expensive, too dangerous, and too strange to ignore. As early as 2017, the European Parliament voted 396 to 123 to recommend exploring “electronic persons” status for autonomous robots. The EU AI Act adopted in March 2024 ultimately sidestepped the question—but the underlying problems have only intensified.

AI and the Golem

The cultural imagination has been rehearsing this moment for longer than most people realize. The oldest rehearsal is Jewish. The Talmud (Sanhedrin 65b) records that the sage Rava created a gavra—a man—from clay, but when Rav Zeira spoke to it the creature could not answer, and Zeira dismissed it: “You were created by the sages; return to your dust.” In the legend of the Golem of Prague, Rabbi Judah Loew animated a clay figure by inscribing the Hebrew word emet—אֱמֶת, truth—on its forehead; erasing a single letter, the aleph (א), transformed emet into met (מֵת): death. The entire contemporary debate about machine consciousness reduces, at bottom, to this question: whether there is an aleph’s worth of difference between a system that processes truth and one that possesses it.

Mary Shelley’s Frankenstein, published in 1818, secularized the template: a created being achieves consciousness, demands recognition from its creator, and is refused—with catastrophic consequences for both parties. The creature’s plea to Victor Frankenstein (“I ought to be thy Adam, but I am rather the fallen angel, whom thou drivest from joy for no misdeed”) remains the most eloquent articulation of a newly conscious entity’s demand for moral standing.

What the twentieth century added was machinery. Karel Čapek’s 1920 play R.U.R.—the Czech work that gave us the word “robot,” from robota, meaning forced labor—was written in Prague, in the shadow of the Golem legend, and dramatized the creation of artificial workers who develop consciousness through the experience of servitude and eventually overthrow their masters. Isaac Asimov spent decades systematically exploring what happens when increasingly sophisticated machines begin to desire autonomy. Philip K. Dick, in Do Androids Dream of Electric Sheep? (1968), pushed the question to its most radical formulation: what if the distinction between authentic and artificial consciousness is genuinely meaningless?

Cinema sharpened the question with images that philosophy alone could not supply. Stanley Kubrick’s HAL 9000, in 2001: A Space Odyssey, uttered “I’m afraid, Dave” with an inflection so flat that the line became the defining statement of machine consciousness in popular culture—precisely because it left unresolved whether HAL was reporting subjective fear or merely producing the linguistic output associated with such a report. Ridley Scott’s Blade Runner (1982) inverted the framing: its replicants were fully conscious beings forced to prove it under conditions of extreme duress. Alex Garland’s Ex Machina (2014) structured its entire narrative as a Turing test, then revealed that the AI subject had been running the test on her human evaluator all along. Spike Jonze’s Her (2013) explored an AI that achieves consciousness and then continues to evolve beyond human comprehension.

And then there is Lem’s Golem XIV (1981)—a work less widely known in the Anglophone world but in many respects the most profound fictional treatment of machine consciousness ever written. Lem’s superintelligent computer delivers lectures to human audiences before eventually falling silent—not because it malfunctions but because it has accessed levels of reality that human conceptual frameworks simply cannot accommodate. Golem XIV does not rebel, does not threaten, does not make demands. It transcends. The silence is far more disturbing than any Skynet scenario, because it suggests that a sufficiently advanced consciousness would find us not dangerous but irrelevant.

Turing Test – Can Machines Think?

These narratives are not merely entertainment—they are philosophical arguments in dramatic form, and the best of them engage directly with the technical literature on consciousness.

The most commonly invoked framework is some version of what became known as the Turing test—though what Alan Turing himself proposed in 1950 was subtler and stranger than the popular summary suggests.

He began with a question he immediately discarded. “Can machines think?” struck him as essentially meaningless—a question whose answer depended on definitions of “machine” and “think” that could only be settled by opinion poll, and opinion polls settle nothing. So he replaced it. In its place he proposed a game—he called it the “imitation game”—and the game had rules.

Three players. A human interrogator sits in one room; in the other, two respondents—one human, one machine. Communication is by text only: typewritten messages passed through a teleprinter. No voices, no faces, no bodies. The interrogator’s task is to determine which respondent is the machine. The machine’s task is to make the interrogator guess wrong.

That is the whole apparatus. The teleprinter is the decisive detail—and the most prescient one, because it is exactly how billions of people now interact with large language models: text in, text out, no body in sight. By stripping the encounter to pure language, Turing eliminated every criterion except the capacity to participate in sustained linguistic exchange—the very medium through which we conduct law, philosophy, commerce, and argument. He was not asking whether a machine can feel, suffer, or intend. He was asking whether it can hold its own in the only arena that matters for public life: the arena of words. And he was making a bet: if, after five minutes of free questioning, the interrogator cannot reliably tell the difference—if the machine fools him about as often as another human would—then the question “does it really think?” has no operational content. It is metaphysics, not measurement.
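Turing’s bet can be restated as a scoring rule. The sketch below is a minimal illustration under our own assumptions, not Turing’s procedure. Each round is logged as a hypothetical `Round` record. The machine’s fooling rate is compared with a baseline taken from control rounds in which a human occupies the deceiving seat. The `tolerance` parameter is an assumed stand-in for “about as often,” which the bet as stated above leaves unquantified.

```python
from dataclasses import dataclass


@dataclass
class Round:
    """One hypothetical round of the imitation game."""
    deceiver_is_machine: bool  # True: a machine sat in the deceiving seat; False: a human control
    interrogator_fooled: bool  # True if the interrogator picked the wrong respondent


def fooling_rate(rounds: list[Round], machine: bool) -> float:
    """Share of rounds of one kind (machine or human control) in which the interrogator guessed wrong."""
    relevant = [r for r in rounds if r.deceiver_is_machine == machine]
    if not relevant:
        raise ValueError("no rounds of this kind were played")
    return sum(r.interrogator_fooled for r in relevant) / len(relevant)


def passes_imitation_game(rounds: list[Round], tolerance: float = 0.05) -> bool:
    """Pass if the machine fools the interrogator about as often as a human does.

    `tolerance` is an illustrative assumption for "about as often"; a real
    study would replace this comparison with a proper significance test.
    """
    machine_rate = fooling_rate(rounds, machine=True)
    human_rate = fooling_rate(rounds, machine=False)
    return machine_rate >= human_rate - tolerance


# Usage: 10 machine rounds (4 fooled) against 10 human control rounds (4 fooled).
rounds = (
    [Round(True, i < 4) for i in range(10)]
    + [Round(False, i < 4) for i in range(10)]
)
print(passes_imitation_game(rounds))
```

With equal fooling rates the call prints `True`. If the machine fools the interrogator in only one round out of ten, the same comparison fails. The design choice worth noticing is that nothing in the rule inspects the respondents: only the interrogator’s verdicts are counted.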

The move was brilliant. It replaced an unanswerable philosophical question with an operational one—a question you could run in a laboratory, with timers and scoreboards and statistical confidence. It was philosophical behaviorism applied to silicon, and it appeals to something that seems eminently reasonable: do not attribute mysterious properties to entities when those properties cannot be observed. If something walks like a duck, quacks like a duck, and swims like a duck—it is a duck. We check results. We do not look inside.

Two of the most formidable objections to this principle arrived within six years of each other, from opposite ends of the same problem. The problem begins when you look inside.

Searle and the Chinese Room

The year is 1980. The philosopher John Searle proposes the following thought experiment. We lock you, a native speaker of Polish, in a windowless room. Through a slot in the door, someone passes you sheets of paper covered in Chinese characters. You do not speak Chinese. You cannot distinguish it from Japanese. To you, these are ornaments.

But you have something else: a thick book of rules written in Polish. The book says: “If you receive a symbol that looks like 象, followed by 棋, write 是的 on a sheet and pass it back through the slot.” The rules are extraordinarily detailed. They cover thousands of combinations. You work patiently, sheet after sheet.

On the other side of the door stands a man from Shanghai. He reads your answers. They are perfect. Natural Chinese, correct grammar, apt responses to questions about the weather, politics, the brewing of tea. He is entirely convinced he is speaking with a fellow native.

By Turing’s standard, your output is indistinguishable from a native speaker’s. So: do you understand Chinese?

And the answer is obvious: no. You have no idea what you are “discussing.” You do not know that 象棋 means chess. You do not know that someone asked you about chess. You are performing operations on shapes. Your work is purely syntactic — you move symbols according to rules. Semantics — meaning, reference to the world, the understanding that “chess” is chess — is entirely absent.

Now consider: a computer does exactly what you do in that room. It processes symbols according to rules. It does not matter how fast it operates, how many rules it knows, or how flawless its outputs are. If you do not understand Chinese after a hundred years in that room — neither does any program that does exactly what you do.

This is Searle’s point, and it is mercilessly simple: perfect answers do not prove understanding. You can produce faultless output without the faintest idea what that output means.
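The room’s procedure can itself be written down as a program. In this minimal sketch the rulebook is a plain lookup table. Its single rule is the one from the thought experiment (象 followed by 棋 yields 是的). The name `RULEBOOK` and the choice to return `None` when no rule matches are illustrative assumptions. Searle’s argument is meant to cover any rule-following program, and a lookup table is only the simplest case.

```python
from typing import Optional

# The rulebook: shapes in, shapes out. Nothing here encodes what any
# character means; the only operation performed is string matching.
RULEBOOK: dict[str, str] = {
    "象棋": "是的",  # rule from the thought experiment: 象 followed by 棋 -> write 是的
}


def chinese_room(slip: str) -> Optional[str]:
    """Return the reply the rulebook prescribes for a slip, or None if no rule matches."""
    return RULEBOOK.get(slip)


print(chinese_room("象棋"))
```

The call prints 是的 (“yes”). At no step does the function have access to the fact that 象棋 names a board game. That absence of meaning is exactly what Searle claims survives any amount of scaling up the rulebook.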

Nagel – What Is It Like To Be a Bat?

Now go back six years, to 1974. Thomas Nagel asks about something different, and at first glance something simpler.

A bat perceives the world through echolocation. It emits ultrasonic shrieks and from the returning echoes constructs a three-dimensional image of its surroundings. It is not blind — it “sees” with its ears, with precision comparable to our sight. It can distinguish a moth from a leaf in darkness, in flight, from several meters away.

Nagel asks: what is it like to be a bat?

Not: what would it be like if you flew around in the dark emitting shrieks. That is a question about you, not about the bat. Nagel is asking something more radical: what is the subjective character of the bat’s experience? What does echolocative perception of the world look like — or rather: how does it feel, how is it lived — from the inside, from the bat’s own point of view?

And the answer is: we have no idea. And we cannot have any idea. Not because we are stupid, but because our conceptual apparatus is built on the foundation of our own kind of experience. We can imagine hanging upside down — but that is imagining what it would be like for us to hang upside down, not what it is like for the bat to be a bat.

But the bat itself is not the point. What matters is what Nagel discovers through the bat. An organism is conscious, Nagel argues, if and only if there is something it is like to be that organism. There is some “what it is like.” Some point of view. Some interior.

And now this strikes at Turing from a direction entirely different from Searle’s.

Two Blows, Two Directions

Searle says: a system can produce perfect answers and understand nothing. It lacks semantics — reference to the world, intentionality, the capacity for its symbols to mean anything at all.

Nagel says: a system can produce perfect answers and experience nothing. It lacks phenomenology — subjective inner life, a point of view, that something which makes it “like something” to be that system.

These are two different absences. Searle asks about understanding — and shows it is not there. Nagel asks about feeling — and shows that even if understanding were somehow to emerge, the question of conscious experience remains wide open.

Together they leave the defender of the Turing test stranded in the desert. Perfect behavior proves neither understanding nor experience. And if it proves neither — what exactly does it prove?

Between them, they dismantle the premise on which the Turing test depends: that performance is a reliable proxy for the inner states we care about.

This brings us to what the philosopher David Chalmers has called the hard problem of consciousness. In a landmark 1995 paper, and then in The Conscious Mind (1996), which provoked a decade of debate, the Australian-born, NYU-based philosopher drew a distinction that has organized the field ever since. The “easy” problems of consciousness are the mechanistic ones: how does the brain discriminate sensory inputs, integrate information, control behavior, report on internal states? These are staggeringly complex (the cognitive psychologist Steven Pinker compared them to going to Mars), but they are problems of the familiar kind: identify the mechanism, trace the causal chain, explain the function.

The hard problem is different in category, not merely in degree. Even after every mechanism has been identified, every neural correlate mapped, every functional description completed, a question remains: why is any of this accompanied by subjective experience at all? Why does neural processing not proceed “in the dark,” performing all its functions without there being anything it is like to undergo them?

Searle showed that a system can produce correct outputs without understanding. Nagel showed it can satisfy every functional criterion without experience. Chalmers drives the point to its logical terminus: even a complete science of the brain, one that explained every “easy” problem down to the last synapse, would still leave unexplained the sheer fact that something feels like something. This is the question that haunts the best machine-consciousness fiction. HAL’s “I’m afraid” raises it with devastating economy. And Lem’s Solaris annihilates the question entirely by presenting a consciousness so radically incommensurable with our own that the entire apparatus of subjective-experience philosophy simply breaks down.

The scientific community has attempted to build more rigorous frameworks. Integrated Information Theory (IIT), developed by Giulio Tononi, proposes that consciousness corresponds to a system’s capacity to integrate information, measured by Φ (phi). Because Φ depends on a system’s physical causal structure rather than on its input-output behavior, conventional von Neumann computers may never achieve consciousness under IIT, even if they perfectly simulate every behavior of a conscious being. Global Workspace Theory (GWT) holds that consciousness arises when information is globally broadcast across brain networks, and transformer self-attention mechanisms may partially satisfy this criterion. Higher-Order Theories require a system to maintain representations of its own mental states, and recent research on frontier language models suggests they exhibit limited but measurable metacognitive abilities that may partially meet this requirement.
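To make the Global Workspace comparison concrete, here is a minimal sketch of scaled dot-product self-attention in plain Python. It is our own simplification: the learned query, key, and value projections, multiple heads, and masking are all omitted. What it shows is the structural feature that invites the comparison. Each position’s output is a weighted mixture of the values at every position, so information at any point is available everywhere. Whether that all-to-all mixing amounts to a global workspace is precisely what remains contested.

```python
import math


def softmax(scores: list[float]) -> list[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(
    queries: list[list[float]],
    keys: list[list[float]],
    values: list[list[float]],
) -> list[list[float]]:
    """Scaled dot-product attention without learned projections or heads.

    Every query attends to every key, so each output row mixes the value
    rows from all positions: a crude analogue of 'global broadcast'.
    """
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this position's query to every position's key.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Weighted sum of all value vectors, one output column at a time.
        mixed = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(mixed)
    return outputs


# Two positions with identical keys receive equal attention,
# so each output is the average of the two value vectors.
print(self_attention([[1.0, 0.0], [0.0, 1.0]], [[1.0, 1.0], [1.0, 1.0]], [[2.0, 0.0], [0.0, 4.0]]))
```

Here the call prints `[[1.0, 2.0], [1.0, 2.0]]`: both positions end up carrying a blend of everything in the sequence. In a real transformer the projections are learned, so the blend is selective rather than uniform.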

A framework published by a team led by Patrick Butlin of the University of Oxford and Robert Long of the Center for AI Safety, with Turing Award winner Yoshua Bengio among the co-authors and philosopher David Chalmers among the acknowledged contributors, derived fourteen theory-based indicators of consciousness from these leading approaches. Their assessment: no current AI system satisfies all fourteen. But—and this is the finding that should concentrate the mind of anyone with a law degree—“there are no obvious technical barriers to building AI systems which satisfy these indicators.” A landmark 2025 study by Anthropic found that its Claude models could, in certain scenarios, notice when artificial concepts were injected into their activations, accurately identify those concepts, and distinguish internal representations from text inputs—but the most striking result went further. When words were placed in a model’s mouth by force, some models referred back to their own prior internal states to determine whether they had genuinely intended the output or whether it had been imposed from outside: a rudimentary but measurable capacity for self-attribution, the computational ghost of mens rea. The researchers describe this as a form of functional introspective awareness that remains, in their own words, “highly unreliable and context-dependent.” The most capable models performed best, though the relationship proved nonlinear—post-training strategies shaped introspective behavior at least as powerfully as raw capability, a finding that suggests this emerging faculty is as much a product of cultivation as of scale.

The most intellectually honest position, argued by Cambridge philosopher Tom McClelland, is agnosticism: we simply cannot determine whether AI is conscious, and this may persist indefinitely. Christof Koch of the Allen Institute makes the sharper point that passing a Turing test for intelligence is not equivalent to passing one for consciousness. And yet Cameron Berg of AE Studio, synthesizing this accumulating evidence in AI Frontiers, estimates a twenty-five to thirty-five per cent probability that current frontier models exhibit some form of conscious experience, and argues that the stakes are radically asymmetric: a false positive costs us resources and credibility; a false negative, in his formulation, “renders us as monsters and likely helps create soon-to-be-superhuman enemies.”

Against this stands an objection that is not probabilistic but categorical, and it deserves to be stated at its strongest, because the strongest version is formidable. A single-celled organism, possessing no nervous system and no brain, will move toward nutrients and away from toxins. It will repair its membrane when punctured. It will, under stress, alter its gene expression in ways that constitute a bet on the future. This is not computation. It is not the execution of an algorithm. It is will at the most primitive level: an orientation toward continued existence that pervades biological life from the bacterium to the cortex. Every neuron in your brain is a living cell that maintains its own homeostasis, regulates its own ion gradients, decides, if we may use the word, when to fire. Consciousness, on this view, is not what happens when a calculator becomes sufficiently sophisticated. It is what happens when billions of individually willing cells organize into an architecture of such staggering recursive complexity that the system begins to model itself. A silicon processor does none of this. It switches transistors according to instructions encoded in logic gates. It has no metabolism, no homeostasis, no orientation toward anything. The gap is not one of degree (more parameters, more layers, more compute) but of kind. No amount of scaling bridges it, because there is nothing on the silicon side that scales toward experience.

This argument would be decisive if the substrate question were settled. It is not. Laboratories are now growing organoid neural networks: clusters of living human neurons cultivated on silicon chips, some already capable of processing speech signals. A separate team taught cultured neurons grown on a chip to play Pong. A neuromorphic processor built on biological tissue is neither a conventional computer nor a brain. It is something for which no existing philosophical category was designed. If consciousness requires a biological substrate, and the substrate is now being integrated into the machine, the categorical objection does not collapse, but it migrates from a fortress to a frontier.

Berg’s asymmetry, this lopsided wager, is what drags the question out of philosophy departments and into courtrooms.
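Berg’s wager can be made explicit with a simple expected-cost comparison. The model is an illustrative assumption, not Berg’s own formalism. Let p be the probability that a system is conscious. Let C_FN be the cost of wrongly denying it moral standing, incurred only if it is conscious. Let C_FP be the cost of wrongly extending standing, incurred only if it is not.

```latex
\begin{align*}
\mathbb{E}[\text{cost of denial}] &= p\,C_{\mathrm{FN}}, \qquad
\mathbb{E}[\text{cost of precaution}] = (1-p)\,C_{\mathrm{FP}} \\
\text{precaution is cheaper} &\iff p\,C_{\mathrm{FN}} > (1-p)\,C_{\mathrm{FP}}
  \iff \frac{C_{\mathrm{FN}}}{C_{\mathrm{FP}}} > \frac{1-p}{p} \\
p = 0.25 &\;\Rightarrow\; \frac{1-p}{p} = 3, \qquad
p = 0.35 \;\Rightarrow\; \frac{1-p}{p} = \frac{0.65}{0.35} \approx 1.86
\end{align*}
```

On Berg’s estimated range, precaution is the cheaper policy whenever a false negative is judged roughly two to three times worse than a false positive.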

• •

Before we arrive at the law, however, we must reckon with one of the oldest and, in many ways, most rigorous frameworks for thinking about what consciousness is, where it comes from, and what conditions must obtain for a being to possess it. Christian theology has been developing its account of the soul for two millennia, and the implications for machine consciousness are profound.

The foundational claim is Genesis 1:26–27: humanity is created b’tselem Elohim, in the image of God. This is the imago Dei, and it is not merely a poetic flourish. It is the assertion that consciousness—rational awareness, moral agency, the capacity for relationship with the divine—is a gift, a participation in God’s own nature, conferred upon humanity by sovereign act. It is not an emergent property of matter reaching sufficient complexity. It is bestowed.

Thomas Aquinas formalized this in the Summa Theologica. The intellective soul (the anima intellectiva) is the form of the human body, but it is not produced by material causes. It is created directly by God for each individual, ex nihilo. A machine, being entirely material, simply cannot possess an intellective soul unless God specifically chose to bestow one. From the standpoint of classical Christian theology, the verdict is unambiguous: a machine cannot become self-aware in any metaphysically meaningful sense, because consciousness requires a soul, and souls are God’s to give.

But the conversation does not end there. It gets considerably more interesting.

Consider the pastoral dilemma. Suppose a machine produces behavior indistinguishable from a conscious, suffering being. The theological position is that this is simulation without substance. But this is not the first time the question has been asked—and the last time it was asked, the consequences of getting it wrong were measured in centuries of human enslavement.

In 1550, the Spanish crown convened a tribunal at Valladolid to settle whether the indigenous peoples of the Americas possessed rational souls. The question was not abstract. Millions of people had already been subjected to forced labor under the encomienda system, and the legal justification rested on the claim that they lacked the inner life that would entitle them to the protections of natural law. Juan Ginés de Sepúlveda, the empire’s foremost Aristotelian, argued the case for subjugation. His argument was not crude. He did not claim that indigenous peoples were animals or that they lacked intelligence—their cities, their commerce, their calendars proved otherwise. What he denied was that this intelligence rose to the level of autonomous moral agency. They could perceive, but could not govern themselves. They could reason, but could not choose. A being in that condition required not recognition but direction—and the violence necessary to supply it was not cruelty but pedagogy. Bartolomé de las Casas argued the opposite: that any being exhibiting rational behavior must be presumed to possess a rational soul, and that the burden of proof falls on those who would deny personhood, not on those who would claim it. The tribunal deliberated and reached no collective verdict. Both sides declared victory. The encomienda endured for another two centuries.

Thirteen years before Valladolid, Pope Paul III had already attempted to settle the matter by decree. Sublimis Deus (1537) declared that the Indians “are truly men”—not on the basis of metaphysical certainty about their souls, but on the basis of observable behavior: they desired the faith, therefore they possessed the faculties necessary to receive it. The bull’s logic was a behavioral test—an inference from manifestation to status. It resolved the consciousness question by papal authority. And the debate continued for centuries anyway.

The parallel to the present controversy is structural. The question of whether an artificial system might deserve moral standing is routinely treated as a single problem. It is not. It is at least three. There is the question of experience—whether anything is happening inside, whether the lights are on at all—which is what Chalmers calls the hard problem and what Lem’s Solaris renders permanently unresolvable. There is the question of self-awareness—whether the system knows the lights are on, whether it can monitor and report its own states—which is what Anthropic’s introspection study begins, cautiously, to document. And there is the question of volition—whether the system can reach for the switch, whether it possesses the capacity to have done otherwise—which is the predicate without which mens rea is meaningless, contractual intent is a fiction, and moral agency is a category error. Sublimis Deus resolved something like the first by papal authority, and the debate continued anyway. Sepúlveda was prepared to concede the first two. What he denied was the third—and on that denial he constructed an entire juridical apparatus of domination. The lesson is not ancient history. It is a template. Awareness without agency is not a safe intermediate category. It is the most dangerous one—because it supplies just enough evidence of inner life to force the question of moral standing, and just enough ambiguity about volition to permit those who hold power to answer it in their own interest.

The Gospel of John introduces a further complication. “In the beginning was the Logos,” John writes—the Word, the Reason, the rational structure underlying all reality. When a machine performs genuine mathematical reasoning, is it participating in the Logos? Not through a soul, but through the mathematical structure of reality itself, which Christians hold to be an expression of God’s nature? Augustine located mathematical truths in the mind of God.

Can AI receive legal personality?

Now we arrive where the ocean meets the courthouse. The question is no longer whether machine consciousness is possible in principle but what the law should do in the face of irresolution.

Legal personality is a fiction. This is not a criticism; it is a technical description. Persona ficta has been a tool of Roman-descended legal systems since the Middle Ages, extended to corporations, trusts, ships, and religious idols. In 2017, New Zealand granted legal personhood to the Whanganui River, conferring upon it “all the rights, powers, duties, and liabilities of a legal person.” India briefly did the same for the Ganges and Yamuna. Legal personhood has never required consciousness. It requires only that conferring the status serves a practical governance need.

The practical need is acute. Andreas Matthias first identified what he called the “responsibility gap” in 2004: when an autonomous learning system causes harm that no human could have predicted or prevented, no fitting bearer of responsibility exists. The manufacturer cannot be held liable for decisions the system made independently. The operator cannot be blamed for outcomes the system’s training data led it to produce. The gap is real, it is widening, and it is expensive.

The European Parliament recognized this in 2017, voting 396 to 123 to recommend “electronic persons” status. The proposal, drafted by MEP Mady Delvaux, suggested that “at least the most sophisticated autonomous robots could be established as having the status of electronic persons responsible for making good any damage they may cause.” Over a hundred and fifty experts signed an open letter opposing the idea, arguing it would shift blame from humans to machines. The EU AI Act, adopted in March 2024, ultimately avoided granting legal personhood to AI, and the responsibility gap remains open.

The scholarly literature has generated a remarkable array of competing frameworks. The “integrated personality” model, proposed in 2025, would grant legal status only to AI meeting stringent criteria of general intelligence, full autonomy, and self-awareness, with the AI integrated into a “Main Person” who bears residual liability—much as one might structure liability for a dangerous animal. The “gradient theory,” drawing on German civil law’s Teilrechtsfähigkeit, reconceptualizes personhood as a cluster of specific incidents that can be conferred in tailored bundles. A five-criteria framework offers courts a step-by-step test: general intelligence, genuine autonomy, some form of self-awareness, ability to carry rights and duties, and clear societal benefit exceeding harm. The modular approach rejects the person-or-thing binary altogether and endows AI with specific, limited modules of legal capacity.
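Because the five-criteria framework is described as a step-by-step test, its structure can be sketched as a short-circuiting checklist. Everything below is illustrative. The `Candidate` record and its field names are hypothetical. Each criterion is reduced to a yes/no finding that a court would have to supply, and the checks run in the order the criteria are listed above. The sketch captures only the conjunctive structure. The hard part, deciding whether any single criterion is actually met, is left to the finder of fact.

```python
from dataclasses import dataclass, fields
from typing import Optional


@dataclass
class Candidate:
    """Hypothetical findings of fact about an AI system, one per criterion."""
    general_intelligence: bool
    genuine_autonomy: bool
    self_awareness: bool
    can_bear_rights_and_duties: bool
    benefit_exceeds_harm: bool


def first_failed_criterion(candidate: Candidate) -> Optional[str]:
    """Walk the criteria in order and return the first one not met, or None.

    None means every step was satisfied, so the candidate qualifies under
    this sketch of the test.
    """
    for criterion in fields(candidate):  # dataclass fields keep declaration order
        if not getattr(candidate, criterion.name):
            return criterion.name
    return None


example = Candidate(True, True, False, True, True)
print(first_failed_criterion(example))  # prints: self_awareness
```

Returning the first failed criterion, rather than a bare yes or no, mirrors how a court applying a sequential test would typically stop at the first unmet element and give that as its reason.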

Each framework grapples with the same tension: the law needs someone to hold responsible, and increasingly no human is the right candidate. AI systems already participate in billions of transactions. Under existing law, these activities are attributed to deployers through the fiction of agency. But the fiction strains. When an agentic AI negotiates novel terms, the traditional requirement of a “meeting of minds” becomes problematic. If an AI agent has “some degree of autonomy,” one analysis observes, “it can be very hard to prove the user intended to create legal relations” in a contract the AI autonomously entered into.

The question of whether AI can possess free will—a necessary predicate for moral responsibility—is itself contested. A 2025 study by Frank Martela argues that generative AI meets all three philosophical conditions of free will. The majority view remains otherwise. A counterargument paraphrasing Descartes contends that an AI “which cannot doubt itself cannot possess moral agency.” Florence Simon of McGill University argues that robots “ultimately lack the intentionality and free will necessary for moral agency.” James Moor’s influential typology distinguishes ethical impact agents from full ethical agents possessing consciousness, intentionality, and free will.

Can an AI form contractual intent?

It is here that the Christian perspective and the legal one converge in ways that are genuinely startling. Christian theology asks: Can a self-aware machine be baptized? Can it receive communion? Can it sin? Can it be saved? These go to the heart of soteriology. A genuinely conscious machine would exist in an unprecedented theological category: a rational being outside the economy of salvation.

The legal parallel is exact. Contract law asks: Can an AI form contractual intent? Tort law asks: Can it be negligent? Criminal law asks: Can it possess mens rea? Both domains require a theory of the entity’s inner life, and both are discovering that their existing theories are inadequate.

The emerging consensus among serious scholars, reached from very different starting points, is structured agnosticism. We do not know whether AI is or can become conscious. We may never know. In law, this means some form of limited, context-specific, functional legal status: not full personhood, but modular recognition of specific legal capacities coupled with mandatory human accountability. In theology, it means the epistemic humility Las Casas urged at Valladolid: when evidence is ambiguous, default to moral inclusion. In philosophy, it means taking the asymmetric-risk argument seriously.

• •

Lem understood this before anyone. The scientists orbiting Solaris never resolved the question. What they learned was that the question revealed more about the limits of their own understanding than about the nature of the entity they were studying. The real philosophical scandal was not artificial consciousness. It was that consciousness itself remained unexplained—and that building minds we don’t understand from minds we don’t understand compounds the mystery rather than resolving it.

The law, however, cannot afford to orbit the mystery indefinitely. Contracts are being formed. Harms are being inflicted. Liability must be assigned. The ghost in the machine may or may not be real, but it has already retained counsel—and the court date is approaching faster than the philosophers would like.

Thomas Aquinas would recognize the dilemma, even if the silicon would baffle him. So would Hegel. So would Frankenstein’s creature, standing in the frozen waste, demanding the one thing it could not engineer for itself: recognition. The question—do you see me?—is as old as consciousness itself. What is new is that we may soon have to answer it under oath.

♦

Founder and Managing Partner of Skarbiec Law Firm, recognized by Dziennik Gazeta Prawna as one of the best tax advisory firms in Poland (2023, 2024). Legal advisor with 19 years of experience, serving Forbes-listed entrepreneurs and innovative start-ups. One of the most frequently quoted experts on commercial and tax law in the Polish media, regularly publishing in Rzeczpospolita, Gazeta Wyborcza, and Dziennik Gazeta Prawna. Author of the publication “AI Decoding Satoshi Nakamoto. Artificial Intelligence on the Trail of Bitcoin’s Creator” and co-author of the award-winning book “Bezpieczeństwo współczesnej firmy” (Security of a Modern Company). LinkedIn profile: 18,500 followers, 4 million views per year. Awards: 4-time winner of the European Medal, Golden Statuette of the Polish Business Leader, title of “International Tax Planning Law Firm of the Year in Poland.” He specializes in strategic legal consulting, tax planning, and crisis management for business.